
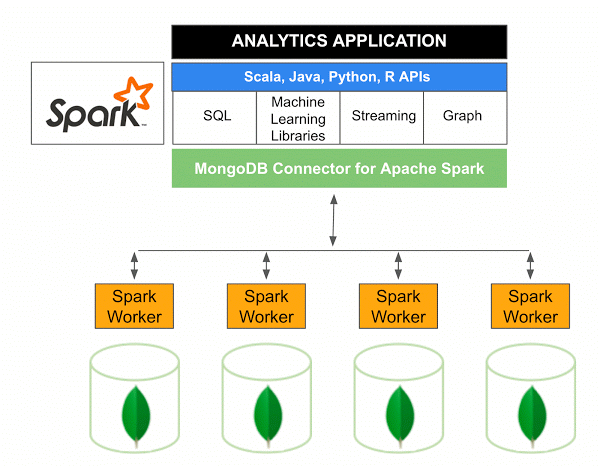

In this scenario, we import the pyspark and pyspark SQL modules and create a Spark session. Note: you need to specify the Mongo Spark connector that suits your Spark version. The session builder in this recipe does so with the line `config('', ':mongo-spark-connector_2.12:3.0.1') \`, where the `_2.12` suffix is the Scala version the connector was built for and `3.0.1` is the connector version. If you specified the connector's configuration options when you started pyspark, the default SparkSession object uses them.

Next, we read the data table from the MongoDB database and create the DataFrame. To read it, we use the session's `read` interface together with the MongoDB connection URL.
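The session setup described above can be sketched as follows. This is a minimal sketch, not the recipe's original code: the configuration keys (`spark.mongodb.input.uri`, `spark.mongodb.output.uri`, `spark.jars.packages`) and the `org.mongodb.spark` group ID are assumptions taken from the 3.0.x connector's conventions, since the text elides them. The host, port, application name, and the helper names `mongo_uri` and `build_session` are hypothetical.

```python
def mongo_uri(host, port, database, collection):
    """Build a MongoDB connection URI that points at one collection."""
    return f"mongodb://{host}:{port}/{database}.{collection}"


def build_session(uri, connector="org.mongodb.spark:mongo-spark-connector_2.12:3.0.1"):
    """Create a SparkSession configured for the MongoDB Spark connector (3.0.x)."""
    # Imported here so that mongo_uri() stays usable without Spark installed.
    from pyspark.sql import SparkSession

    return (
        SparkSession.builder
        .appName("mongo-read-example")             # hypothetical application name
        .config("spark.mongodb.input.uri", uri)    # assumed key: default URI for reads
        .config("spark.mongodb.output.uri", uri)   # assumed key: default URI for writes
        .config("spark.jars.packages", connector)  # assumed key: Maven coordinates to fetch
        .getOrCreate()
    )


# Assumed local MongoDB instance; adjust host and port for your environment.
uri = mongo_uri("127.0.0.1", 27017, "dezyre", "books")

# Requires Spark plus network access to download the connector:
# spark = build_session(uri)
```

Pick the connector artifact whose Scala suffix and version match your Spark installation. A mismatch typically shows up as class-loading errors when the session first touches MongoDB.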

Here we have a table, or collection, of books in the dezyre database. In this scenario, we read the data from that MongoDB collection.
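Reading the collection into a DataFrame could look like the sketch below. It assumes the 3.0.x connector, which registers the short format name `mongo` and accepts a `uri` option. It also assumes the collection is named `books`, and that MongoDB runs locally on port 27017. The function names `read_collection` and `main` are hypothetical.

```python
def read_collection(spark, uri):
    """Load the MongoDB collection addressed by `uri` into a Spark DataFrame."""
    return (
        spark.read              # DataFrameReader exposed by the session
        .format("mongo")        # short name used by connector 3.0.x (assumption)
        .option("uri", uri)     # which database.collection to read
        .load()
    )


def main():
    """Read dezyre.books and print its inferred schema and first rows."""
    from pyspark.sql import SparkSession

    uri = "mongodb://127.0.0.1:27017/dezyre.books"  # assumed local instance
    spark = (
        SparkSession.builder
        .appName("mongo-read-example")  # hypothetical application name
        .config(
            "spark.jars.packages",
            "org.mongodb.spark:mongo-spark-connector_2.12:3.0.1",
        )
        .getOrCreate()
    )
    books_df = read_collection(spark, uri)
    books_df.printSchema()  # the connector infers the schema by sampling documents
    books_df.show()
```

Call `main()` on a machine where both Spark and the MongoDB instance are reachable. `read_collection` only needs an existing session, so it also works with the default `spark` object in a pyspark shell.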
